Humans and the Atom: A Disaster in the Making

Twenty-eight years ago this past April, the Chornobyl disaster forever changed our world. In one massive mistake we rendered a swath of Ukraine and Belarus unlivable for hundreds of years, and unleashed a plume of radioactive contamination across Europe. Attempts to cover up the disaster were among the many catalysts for the Soviet Union's eventual dissolution. But in a more global sense, nuclear power had held so much promise for us. Scientists of the Atomic Age were incredibly optimistic, yet we would eventually find out that nuclear technology, although powerful, was incredibly dangerous.

Humankind's perilous liaison with nuclear technology began forty-four years before the Chornobyl disaster, when the first artificial self-sustaining nuclear chain reaction occurred at Chicago Pile-1 - the world's first nuclear reactor. That achievement was an early step in the Manhattan Project, the United States' race to develop a nuclear bomb. Our subsequent entrance to the Atomic Age was almost blind - no one knew exactly what would happen during the first atomic bomb test, code-named Trinity, in the New Mexican desert. Predictions amongst Manhattan Project scientists ranged from the bomb failing and doing nothing at all, to the extremely remote possibility that it would ignite the Earth's atmosphere and cause unprecedented destruction. The debate was settled at 5:29 AM when the bomb was detonated, with an audience standing in the desert to watch the grand conflagration. The resulting bright white flash was both terrifying and awe-inspiring - humans had harnessed the atom.

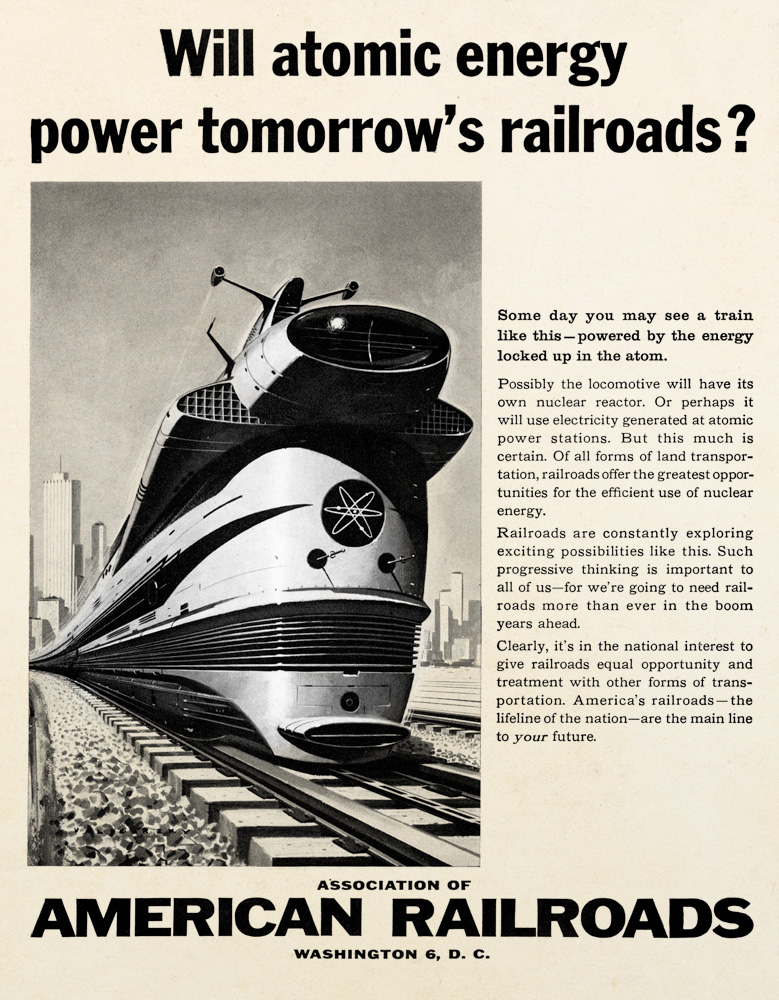

Hidden within radioactive elements like uranium and plutonium is the ability to sustain a powerful nuclear chain reaction. These chain reactions release large amounts of energy, which could cause destruction in the form of bombs, but could also be used in a peaceful capacity as an energy source. The optimism of the day led to fanciful dreams of nuclear power plants across the globe, and of nuclear-powered submarines, planes, trains, and even automobiles. Idealists envisioned a world where we no longer needed to mine coal, and gas stations would be a thing of the past. Many of these ideas were promoted by scientists who had played parts in the development of atomic bombs - they wanted their legacy to be not one of destruction, but something more positive. Some of the ideas, however, were resounding failures. The American military invested over a billion dollars to create a nuclear-powered airplane, but the idea was never quite feasible. Both nuclear submarines and power plants, however, proved to be worthwhile investments, and were actively produced by the United States and the Soviet Union.

Although there was much optimism, nuclear technology has a very dangerous side effect - ionizing radiation. Humans were at least cursorily aware of the phenomenon - x-rays, discovered by Wilhelm Roentgen in 1895, were known to cause burns on the skin. Radium, discovered by Marie Curie in 1898, became a fad because it glowed. But the serious effects of that glow slowly became clear as factory workers who coated watch dials with radium paint became grievously ill and died. It wasn't until nuclear bombs were dropped on Hiroshima and Nagasaki that we truly got a sense of the real terrors of nuclear technology, and of radiation's serious effects on living things. Citizens who survived the initial bomb blast died slow, agonizing deaths from a combination of nausea, vomiting, diarrhea, hair loss, fever, and hemorrhaging - all the hallmarks of radiation sickness. Those who survived those symptoms were plagued with radiation-induced illnesses and cancers for the remainder of their lives. Many at Chornobyl would suffer a similar fate.

Despite the slowly mounting evidence of radiation's damaging effects on the body, humans retained a cocksure attitude towards the risks of nuclear technology. In two separate incidents, Manhattan Project scientists performing unnecessarily risky tests made drastic mistakes and were fatally irradiated by a core of plutonium. They were the first casualties of high doses of hard radiation from a nuclear core. A similarly brash attitude was prevalent in Great Britain - during construction of the air-cooled Windscale reactor, chimney filters to prevent radioactive particles from being released into the atmosphere were deemed a waste of both money and time. Scientist John Cockcroft insisted on the installation of the filters, which gave rise to the derogatory name "Cockcroft's Folly." When the reactor caught fire in 1957, these filters saved the environment from a catastrophic release of radiation, vindicating Cockcroft. One year later, a chemical operator in New Mexico was fatally irradiated by plutonium in a mixing tank. Such an accident was thought to be impossible, yet in under a second the man absorbed such a massive dose of radiation that he was dead in less than 48 hours.

Where the New Mexican and Nevadan deserts were the testing grounds for nuclear bombs, the Idaho desert became the proving ground for America's nuclear reactors. It was here that the Army's SL-1 reactor exploded in 1961, killing its three operators and heavily polluting the surrounding area. One operator's corpse was so thoroughly contaminated that parts of it could not be returned to the family for burial - they were instead entombed in the desert as nuclear waste. The SL-1 accident was only the second most serious nuclear event ever to happen in the United States, behind the 1979 Three Mile Island accident. All of these events proved powerful reminders of the might of nuclear technology, and the danger of radioactivity. Such factors played an integral part in turning popular opinion against nuclear power, a stark contrast to the boundless optimism of the early years of the nuclear age.

As the West slowly awoke, the Soviet Union retained its cocky attitude towards nuclear technology, which was reinforced by government propaganda. Two oft-repeated adages held that the "peaceful atom" was completely safe, and that nuclear accidents only happened in the West. Evidence to the contrary, which was plentiful, was thoroughly covered up, and dissent suppressed. Few, even among those working in the nuclear power industry, were ever aware of the partial meltdown at the Leningrad Nuclear Power Plant in 1975, or even of the massive explosion of nuclear waste at the Mayak plant that resulted in the Kyshtym Disaster. That 1957 disaster turned parts of Chelyabinsk oblast into some of the most polluted spots on Earth, and left them uninhabitable for years - surely a foreshadowing of events to come.

Although there are many risks, nuclear power does generate electricity without releasing the greenhouse gases that result in global warming. By the 1970s, nuclear power plants were gaining popularity, partially for this reason. Soviet leader Mikhail Gorbachev hoped that nuclear power would trigger rapid growth. With nuclear power revered as such, it is unsurprising that men fought to be appointed to positions where they would oversee these new plants. Unfortunately, many men appointed to top spots were looking only for the money and prestige, and had few qualifications for overseeing nuclear power plants. With backgrounds in hydroelectric or thermal power plants, they were unfamiliar with the nuances of nuclear technology, and often made decisions where safety was sacrificed to save money and meet unrealistic deadlines. Many falsely believed that nuclear reactors were but simple machines, no more complicated than an average samovar - a gross oversimplification of the skilled work required to properly operate a nuclear power plant. Unfortunately, it was this lax culture and the belief that reactors were utterly safe that led to carelessness. Coupled with the typical Soviet response to any accident - covering it up - this carelessness ensured that no one could actually learn from mistakes, and created an environment ripe for disaster.